There have been headlines recently about AI companies and defence contracts — about which model a government chooses, which company holds the deal, and what that means for the direction of the technology. The details are contested and the politics are loud.

What the noise does illustrate is something worth paying attention to: the model landscape is not stable, the commercial relationships built around it are not stable, and any organisation that has built a dependency on today's arrangement may find itself reorganising sooner than expected.

The Scoreboard

There is a platform called the Arena Leaderboard, found at [arena.ai/leaderboard](https://arena.ai/leaderboard), that ranks AI models based on millions of real, blind, head-to-head comparisons by human users. It originated from research at UC Berkeley's LMSYS group and has become the most widely cited independent benchmark in the field. No lab sets its own score. The ranking reflects genuine preference across a wide range of tasks.

The Arena runs separate leaderboards for different functions — text, coding, vision, document handling, and more. A model that leads in one area may rank differently in another. As of the date of writing, in the text leaderboard, the top three models are separated by four Elo points (an Elo score is a relative performance rating borrowed from competitive chess — the higher the score, the more consistently a model wins head-to-head comparisons). Four points. The top positions are held by Claude, Gemini, and Grok — in that order, right now — but the margin is negligible. In the coding leaderboard, Claude currently leads convincingly. In other categories, the order shifts again.
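To put four points in perspective, here is a minimal sketch that converts a rating gap into an expected head-to-head win rate. It assumes the standard chess Elo formula on a 400-point scale; the Arena's own rating method may differ in detail, and the function name `expected_win_rate` is purely illustrative.

```python
def expected_win_rate(rating_gap: float, scale: float = 400.0) -> float:
    """Expected share of head-to-head wins for the higher-rated model.

    Assumes the standard chess Elo formula; the leaderboard's exact
    rating method may differ.
    """
    return 1.0 / (1.0 + 10.0 ** (-rating_gap / scale))


if __name__ == "__main__":
    # Compare a few gaps to see how little four points means.
    for gap in (0, 4, 50, 100):
        rate = expected_win_rate(gap)
        print(f"{gap:>3}-point gap -> {rate:.1%} expected win rate")
```

Under that assumption, a four-point lead corresponds to winning roughly 50.6% of comparisons, which is barely better than a coin toss.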

This leaderboard updates continuously. The rankings can shift week to week — sometimes faster — depending on what the labs release and how quickly the market responds. Any strategy built around a specific model's current position on that scoreboard is a strategy with a limited shelf life.

Four Layers That Many People Conflate

Many conversations about "choosing an AI" collapse four distinct things into one. Understanding how they relate to each other changes the decisions you make at every level.

The company is the organisation that builds and owns the underlying model. For example, Anthropic owns Claude, OpenAI owns GPT, Google owns Gemini, xAI owns Grok, and Meta owns Llama; this list expands as new entrants emerge. Each company releases successive versions and tiers of its models (Claude Opus, Claude Sonnet; GPT-4o, GPT-5), improving capability with each iteration. The company behind the model matters for understanding accountability, governance posture, and where the technology is heading.

The model is the intelligence itself — the reasoning engine (for example, Claude Opus, GPT-5, Gemini Pro). More on this later.

The product is the commercial interface built on top of the model. ChatGPT is a product. Claude.ai is a product. Microsoft Copilot is a product. These are the chat windows, the subscription plans, the branded experiences most people interact with day to day. They are wrappers around the model.

The system is how your organisation deploys the intelligence — the workflows, integrations, automations, and processes that plug into it. This is where AI becomes operational rather than experimental.

The table below maps the major players across all four layers as of the date of writing. The landscape changes frequently — treat this as a snapshot, not a fixed register.

| Company | Model Family | Consumer Product | Other Access Points |
|---|---|---|---|
| Anthropic | Claude (Opus, Sonnet, Haiku) | Claude.ai | API, third-party tools |
| OpenAI | GPT (GPT-4o, GPT-5 series) | ChatGPT | API, Microsoft Copilot |
| Google | Gemini (Pro, Flash series) | Gemini | API, Google Workspace |
| xAI | Grok | Grok.com | API |
| Meta | Llama (open source) | None | API, self-hosted, embedded in third-party tools |
| Mistral (France) | Mistral, Mixtral | Le Chat | API, self-hosted |
| Amazon (AWS) | Nova, Titan | None | AWS Bedrock API |
| DeepSeek (China) | DeepSeek R1 | DeepSeek.com | API |
| Perplexity AI | Sonar model family; R1 1776 | Perplexity.ai | Sonar API, R1 1776 open source via Hugging Face |

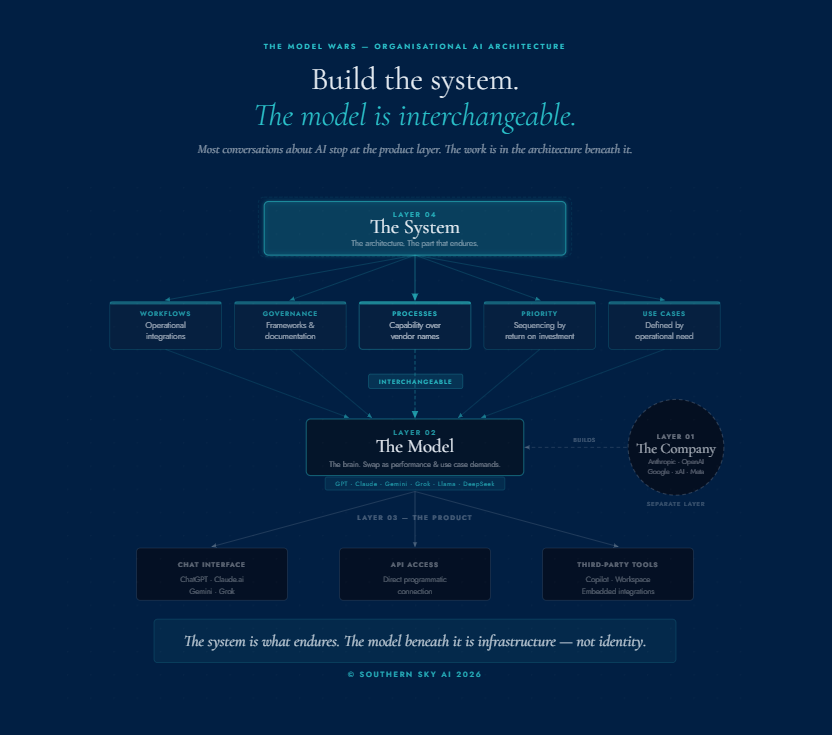

The Model Wars: Organisational AI Architecture. Build the system. The model is interchangeable.

Most conversations about AI stop at the product layer. The work is in the architecture beneath it.

Many organisations are operating at the product layer and calling it AI adoption. The real work is at the system layer.

The system layer is the architecture that defines how your organisation taps into the intelligence — what you will use it for, why, and in what order of priority based on return. It specifies the connections, the workflows, the governance, and the decisions about which capabilities matter most to your operations. The models and products that sit beneath that architecture can be swapped in and out as better options emerge or use-case requirements change. The architecture itself is what endures.
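One way to picture that separation is a small sketch in which the use cases and their priorities live in one place and the model choices live in another. Everything here is hypothetical: the use-case names, the return scores, the model identifiers, and the `deployment_plan` helper are placeholders for illustration, not a recommended schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    capability: str         # what the task needs, not who supplies it
    expected_return: float  # placeholder score, higher means more valuable


# Hypothetical use cases; names and scores are illustrative only.
USE_CASES = [
    UseCase("Draft customer replies", "text", 3.0),
    UseCase("Extract data from invoices", "document", 5.0),
    UseCase("Review internal scripts", "coding", 2.0),
]

# The only place a model is named; these identifiers are made up.
MODEL_BINDINGS = {"text": "model-a", "document": "model-b", "coding": "model-a"}


def deployment_plan(use_cases, bindings):
    """Return (use case name, bound model) pairs, highest return first."""
    ranked = sorted(use_cases, key=lambda u: u.expected_return, reverse=True)
    return [(u.name, bindings[u.capability]) for u in ranked]


if __name__ == "__main__":
    print(deployment_plan(USE_CASES, MODEL_BINDINGS))
    # Swapping a model is a one-line change to the bindings.
    print(deployment_plan(USE_CASES, {**MODEL_BINDINGS, "coding": "model-c"}))
```

The priorities and use cases stay exactly as they were when a binding changes; only the model named against a capability moves.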

What the Model Is

A large language model is a brain — a reasoning engine trained on a volume of human knowledge that exceeds what any individual could read in a lifetime. It holds language, logic, context, and the capacity to reason across all of it simultaneously. Each successive version of a model — each new release from Anthropic, OpenAI, Google — represents that brain getting more capable.

Think of a food truck. The model is the kitchen — everything that reaches you comes from it, but there are several ways to access what it produces.

The chat interface is the service window at the front. You place your order, you receive it directly. Accessible, immediate, designed for individual use.

The API is the side door — a direct programmatic connection that lets your own software talk to the kitchen without a human at the counter. Your systems send a request; the response comes back invisibly, inside a workflow you've designed.

Third-party tools are the walkway between the kitchen and the restaurant around the corner — a vendor with their own setup, their own experience, serving you the same intelligence through a different door entirely.

This is why the question "which product should we choose?" only gets you so far. The product is one route to the kitchen. The more useful question is: how do we connect to this intelligence in a way that serves our operations, and how do we build that connection so it doesn't break every time the model landscape shifts?

The Handheld GPS Problem

Think about a handheld GPS. It works. It gives you real, reliable navigation information. Nobody questions its value — it earns its place aboard.

But nobody would design a vessel's navigation infrastructure around one.

It is a personal tool. The right instrument for a particular use case. It is categorically different from the integrated navigation systems a vessel runs on.

A personal subscription to an AI chat product — ChatGPT, Claude.ai, Copilot, Gemini — is the handheld GPS of AI. It is a valuable personal productivity tool. It builds fluency. It lets individuals test the capability, form their own views, understand the interface.

That is worth doing. Encourage it.

But buying thirty subscriptions to a chat interface and distributing them across your team is not an AI adoption strategy. It is thirty people with handheld GPSs and no agreed navigation architecture.

The distinction matters because the two things require different thinking, different governance, different investment decisions — and different conversations entirely.

The Product Layer Is Not Fixed Either

Many of the standalone AI productivity tools that exist today are built on the same underlying models the major labs provide directly. The capability those tools offer is, in many cases, a layer of packaging and workflow design on top of the same intelligence.

The models themselves are expanding what they do natively. Capabilities that currently exist as separate products may, over time, be absorbed into the model layer directly. That is the direction the technology is moving.

This creates a specific risk for organisations that have made deep commitments to particular product ecosystems — especially on long-term contracts — without understanding that the product layer is not structurally stable.

This is precisely why the system layer matters. When the products beneath your architecture shift — and they will — a well-designed system absorbs that change. You swap out the lead. The architecture holds.

For what it's worth: I sign up to AI products on monthly subscriptions only. Twelve months is a long time in this landscape, and flexibility has real value. Lock-in is a choice. It is worth making deliberately.

The Design Principle: Model Agnosticism

Model agnosticism means building AI systems that treat the intelligence layer as interchangeable infrastructure rather than fixed architecture.

In practice this means:

Your documented processes describe what the AI does in your operations — not which specific model or product does it.

Your governance frameworks refer to capability requirements, not vendor names.

Your workflows are designed so that the model underneath them can be upgraded, replaced, or adjusted without rebuilding the entire system.

This is structural discipline — the same discipline that applies to any critical infrastructure decision. You don't build a vessel's systems around a single supplier and hope that supplier's product line never changes.

Model agnosticism is a design principle for building AI systems that last.
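In code, the principle might look something like the sketch below: the workflow depends on a small interface, and each vendor sits behind its own adapter. The adapter classes, model names, and sample report are all invented for illustration, and the adapters only echo the prompt; a real adapter would call that vendor's API in its `complete` method.

```python
from dataclasses import dataclass
from typing import Protocol


class TextModel(Protocol):
    """Any model the workflow can use: it only needs a `complete` method."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class VendorAAdapter:
    """Hypothetical adapter; a real one would call that vendor's API."""
    model_name: str = "vendor-a-large"

    def complete(self, prompt: str) -> str:
        return f"[{self.model_name}] {prompt}"


@dataclass
class VendorBAdapter:
    """Second hypothetical adapter with the same interface."""
    model_name: str = "vendor-b-fast"

    def complete(self, prompt: str) -> str:
        return f"[{self.model_name}] {prompt}"


def summarise_incident_report(report: str, model: TextModel) -> str:
    """The documented process: describes the task, names no vendor."""
    prompt = f"Summarise this report in three bullet points:\n{report}"
    return model.complete(prompt)


if __name__ == "__main__":
    # Placeholder report text, used only to exercise the workflow.
    report = "Engine room bilge alarm triggered at 02:14; pump restarted."
    for model in (VendorAAdapter(), VendorBAdapter()):
        print(summarise_incident_report(report, model))
```

Replacing the model means writing or choosing a different adapter; `summarise_incident_report` and anything built on it stay untouched.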

Where to Start

Personal AI tools are valuable. The fluency that comes from regular, curious use of a chat product is worth developing. It's how people develop an intuitive sense of what the technology can and can't do.

But there is a difference between personal fluency and organisational infrastructure. Both matter. They are different things. Skipping from personal tool to organisation-wide deployment without addressing the layer in between is where most AI adoption runs into trouble. That layer is what the Compass AI Blueprint is built to address. And for maritime professionals wanting to explore Claude specifically, I have written a practical guide to making the move.

The organisations that will be well-positioned in two or three years are not necessarily the ones that chose the right model in 2026.

They are the ones that understood how the layers work, built their systems with appropriate flexibility, and made governance decisions that can survive a leaderboard shuffle.

The only constant in this landscape is change.

Build accordingly.

Founder & Principal Digital Navigator, Southern Sky AI

The Arena Leaderboard is available at arena.ai/leaderboard. Rankings are based on crowdsourced blind comparisons and update continuously. All leaderboard data cited reflects the position at the time of writing.

Ready to think through your organisation's AI architecture? The Compass AI Blueprint maps your current position and charts a structured path forward. Request the Engagement Guide.